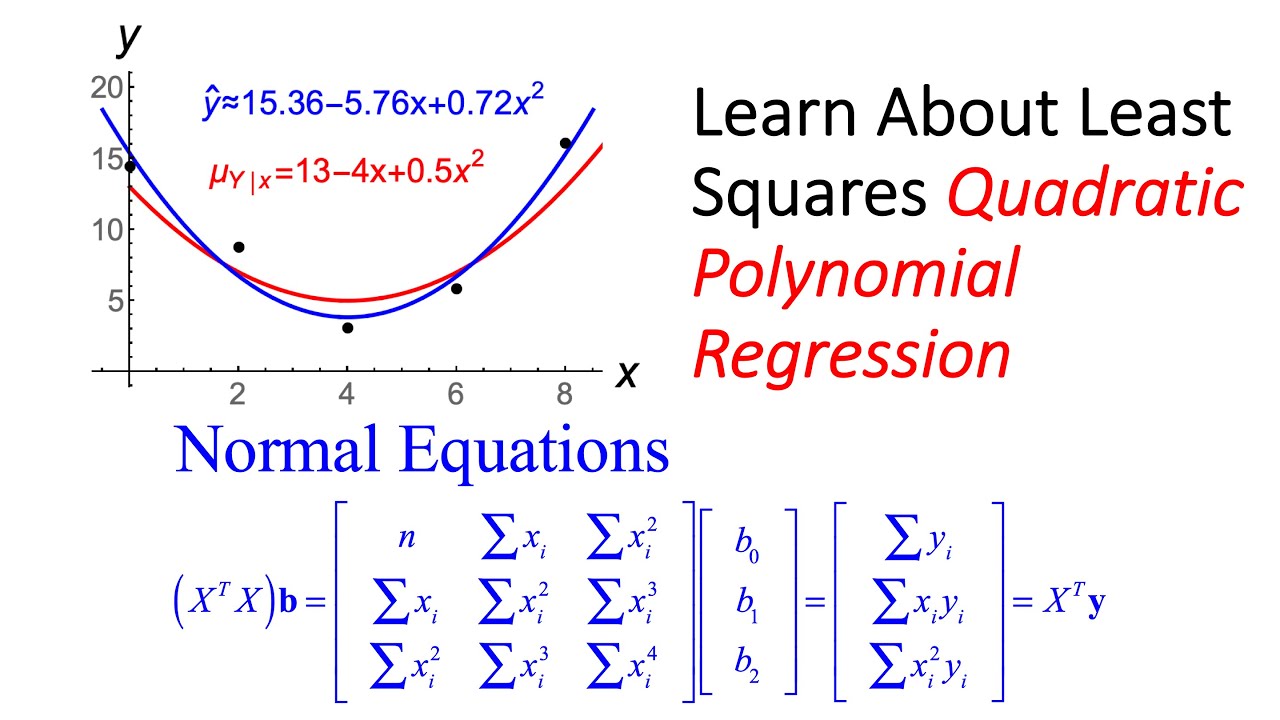

Sometimes, when you analyze data with correlation and linear regression, you notice that the relationship between the independent (\(X\)) variable and dependent (\(Y\)) variable looks like it follows a curved line, not a straight line. In that case, the linear regression line will not be very good for describing and predicting the relationship, and the \(P\) value may not be an accurate test of the null hypothesis that the variables are not associated.

You have three choices in this situation. If you only want to know whether there is an association between the two variables, and you're not interested in the line that fits the points, you can use the \(P\) value from linear regression and correlation. This could be acceptable if the line is just slightly curved; if your biological question is "Does more \(X\) cause more \(Y\)?", you may not care whether a straight line or a curved line fits the relationship between \(X\) and \(Y\) better. However, it will look strange if you use linear regression and correlation on a relationship that is strongly curved, and some curved relationships, such as a U-shape, can give a non-significant \(P\) value even when the fit to a U-shaped curve is quite good. And if you want to use the regression equation for prediction or you're interested in the strength of the relationship (\(r^2\)), you should definitely not use linear regression and correlation when the relationship is curved.

Your third option is curvilinear regression: finding an equation that produces a curved line that fits your points. There are a lot of equations that will produce curved lines, including exponential (involving \(b^X\), where \(b\) is a constant), power (involving \(X^b\)), logarithmic (involving \(\log (X)\)), and trigonometric (involving sine, cosine, or other trigonometric functions). For any particular form of equation involving such terms, you can find the equation for the curved line that best fits the data points, and compare the fit of the more complicated equation to that of a simpler equation (such as the equation for a straight line).

Here I will use polynomial regression as one example of curvilinear regression, then briefly mention a few other equations that are commonly used in biology. A polynomial equation is any equation that has \(X\) raised to integer powers such as \(X^2\) and \(X^3\). One polynomial equation is a quadratic equation, which has the form \(\hat{Y}=a+b_1X+b_2X^2\), where \(a\) is the \(Y\)-intercept and \(b_1\) and \(b_2\) are constants. A cubic equation has the form \(\hat{Y}=a+b_1X+b_2X^2+b_3X^3\) and produces an S-shaped curve, while a quartic equation has the form \(\hat{Y}=a+b_1X+b_2X^2+b_3X^3+b_4X^4\) and can produce \(M\) or \(W\) shaped curves. You can fit higher-order polynomial equations, but it is very unlikely that you would want to use anything more than the cubic in biology.

One null hypothesis you can test when doing curvilinear regression is that there is no relationship between the \(X\) and \(Y\) variables; in other words, that knowing the value of \(X\) would not help you predict the value of \(Y\). This is analogous to testing the null hypothesis that the slope is \(0\) in a linear regression.

You measure the fit of an equation to the data with \(R^2\), analogous to the \(r^2\) of linear regression. As you add more parameters to an equation, it will always fit the data better; for example, a quadratic equation of the form \(\hat{Y}=a+b_1X+b_2X^2\) will always be closer to the points than a linear equation of the form \(\hat{Y}=a+b_1X\), so the quadratic equation will always have a higher \(R^2\) than the linear. A cubic equation will always have a higher \(R^2\) than quadratic, and so on. The second null hypothesis of curvilinear regression is that the increase in \(R^2\) is only as large as you would expect by chance.

If you are testing the null hypothesis that there is no association between the two measurement variables, curvilinear regression assumes that the \(Y\) variable is normally distributed and homoscedastic for each value of \(X\). Since linear regression is robust to these assumptions (violating them doesn't increase your chance of a false positive very much), I'm guessing that curvilinear regression may not be sensitive to violations of normality or homoscedasticity either. I'm not aware of any simulation studies on this, however.

Curvilinear regression also assumes that the data points are independent, just as linear regression does. You shouldn't test the null hypothesis of no association for non-independent data, such as many time series.
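To make the comparison of linear, quadratic, and cubic fits concrete, here is a minimal Python sketch. Everything in it is an illustration rather than something taken from the text: the data are made up to follow a rough U-shape, the function names (`fit_polynomial`, `r_squared`, `f_for_added_term`) are hypothetical, and the \(F\) statistic uses a standard extra-sum-of-squares formula that I am assuming is an acceptable way to test the increase in \(R^2\).

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares coefficients [a, b1, ..., b_degree] via the normal equations."""
    n = degree + 1
    # Normal equations (X^T X) c = X^T y, using the powers X^0 .. X^degree.
    A = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    rhs = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        rhs[col], rhs[pivot] = rhs[pivot], rhs[col]
        for row in range(col + 1, n):
            factor = A[row][col] / A[col][col]
            for k in range(col, n):
                A[row][k] -= factor * A[col][k]
            rhs[row] -= factor * rhs[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(A[i][k] * coeffs[k] for k in range(i + 1, n))
        coeffs[i] = (rhs[i] - tail) / A[i][i]
    return coeffs


def r_squared(xs, ys, coeffs):
    """R^2 = 1 - (residual sum of squares) / (total sum of squares)."""
    mean_y = sum(ys) / len(ys)
    ss_total = sum((y - mean_y) ** 2 for y in ys)
    ss_resid = sum(
        (y - sum(c * x ** p for p, c in enumerate(coeffs))) ** 2
        for x, y in zip(xs, ys)
    )
    return 1 - ss_resid / ss_total


def f_for_added_term(r2_simple, r2_complex, n_points, degree_complex):
    """F statistic (1 and n - degree - 1 d.f.) for adding one polynomial term.

    Assumed formula: the standard extra-sum-of-squares F test.
    """
    df_resid = n_points - degree_complex - 1
    return (r2_complex - r2_simple) / ((1 - r2_complex) / df_resid)


# Made-up, roughly U-shaped data (illustration only, not real measurements).
xs = [1, 2, 3, 4, 5, 6, 7, 8, 9]
ys = [16.5, 9.2, 3.8, 1.1, 0.3, 0.9, 4.2, 8.7, 16.3]

fits = {d: fit_polynomial(xs, ys, d) for d in (1, 2, 3)}
r2 = {d: r_squared(xs, ys, fits[d]) for d in fits}
f_quadratic = f_for_added_term(r2[1], r2[2], len(xs), 2)

for d in (1, 2, 3):
    print(f"degree {d}: R^2 = {r2[d]:.4f}")
print(f"F for adding the quadratic term: {f_quadratic:.1f}")
```

With U-shaped data like these, the straight line has an \(R^2\) near zero even though the quadratic fits closely, which is the situation where linear regression can give a non-significant \(P\) value despite a strong curved relationship. To get a \(P\) value you would compare the \(F\) statistic to an \(F\) distribution with 1 and \(n-k-1\) degrees of freedom, where \(k\) is the degree of the more complicated equation.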
